Upgrading from VMware Cloud Foundation (VCF) 5.2.x to VCF 9.1 is not just a version upgrade. It is a major architectural transformation that introduces a new management model, new lifecycle services, centralized fleet operations, and a redesigned private cloud operational framework.

With VCF 9.1, VMware has introduced a modernized management architecture built around VCF Management Services, Fleet Lifecycle, unified operations, enhanced automation, and scalable lifecycle management capabilities. Organizations planning this transition should approach the upgrade carefully, with proper planning, validation, and sequencing.

In this blog, I will walk through the complete upgrade flow from VCF 5.2.x to VCF 9.1 based on the official upgrade workflow, operational experience, and the latest VCF 9.1 architecture updates.

Understanding the Upgrade Journey

Before starting the upgrade, it is important to understand that this is a phased transition.

VCF 9.1 introduces several architectural changes:

- Introduction of VCF Management Services
- Replacement of Aria Suite Lifecycle functionality
- Fleet-based lifecycle management
- Unified management services runtime
- Enhanced lifecycle orchestration
- New operational framework for Automation and Operations
- Improved upgrade orchestration
- Centralized identity and policy management

If your environment is currently running anything earlier than VCF 5.2.x, you must first upgrade to VCF 5.2.x before proceeding to VCF 9.1.
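A quick way to encode this prerequisite in your own pre-upgrade tooling is a version gate. The Python sketch below is illustrative only: `parse_version` and `next_step` are hypothetical helpers, and the version string is assumed to be read from SDDC Manager by whatever means you already use.

```python
# Minimal sketch: gate the VCF 9.1 upgrade on the source release.
# Hypothetical helpers; obtain the current version from SDDC Manager yourself.


def parse_version(version: str) -> tuple:
    """Turn a dotted version such as '5.2.1.0' into a comparable tuple of ints."""
    return tuple(int(part) for part in version.split("."))


def next_step(current_version: str) -> str:
    """Return the next action for an environment running current_version."""
    if parse_version(current_version)[:2] < (5, 2):
        return "upgrade to VCF 5.2.x first"
    return "eligible for the VCF 9.1 upgrade path"


print(next_step("5.1.1"))    # prints "upgrade to VCF 5.2.x first"
print(next_step("5.2.1.0"))  # prints "eligible for the VCF 9.1 upgrade path"
```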

What Changes in VCF 9.1?

One of the biggest changes introduced in VCF 9.1 is the separation of management services from the traditional appliance-based architecture.

VCF 9.1 introduces:

- Shared Services Runtime
- Fleet Lifecycle Management
- Centralized Binary Management
- Unified Identity Services
- Modernized Operations Architecture
- Enhanced Automation Framework
- Real-Time Operational Insights
- Simplified Lifecycle Management

According to VMware’s latest VCF 9.1 announcements, VCF can now scale up to 5,000 ESXi hosts and support upgrades across 256 clusters simultaneously.

Pre-Upgrade Planning

Before beginning the upgrade process, perform a detailed assessment of the environment.

Validate the Following

Hardware Compatibility

Ensure all components are supported for VCF 9.1:

- ESXi hosts
- vSAN controllers
- NICs
- CPUs
- Storage controllers
- NSX Edge appliances

DNS and NTP Validation

Many VCF upgrade failures are related to:

- Incorrect forward DNS records
- Missing reverse (PTR) entries
- Time synchronization issues

Ensure:

- All components resolve correctly
- Forward and reverse DNS records exist
- NTP is synchronized across all appliances
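The forward and reverse checks can be scripted and rerun before every upgrade window. The sketch below uses only the Python standard library (`socket.gethostbyname` and `socket.gethostbyaddr`); `check_dns` is a hypothetical helper, and the resolvers are parameters so the logic can be tested without a live DNS server.

```python
import socket
from typing import Callable, List


def _reverse_lookup(ip: str) -> str:
    # gethostbyaddr returns (hostname, aliases, addresses); keep the hostname.
    return socket.gethostbyaddr(ip)[0]


def check_dns(
    fqdns: List[str],
    forward: Callable[[str], str] = socket.gethostbyname,
    reverse: Callable[[str], str] = _reverse_lookup,
) -> List[str]:
    """Return DNS problems; an empty list means every FQDN round-trips."""
    problems = []
    for fqdn in fqdns:
        try:
            ip = forward(fqdn)
        except OSError as exc:  # socket.gaierror is a subclass of OSError
            problems.append(f"{fqdn}: forward lookup failed ({exc})")
            continue
        try:
            name = reverse(ip)
        except OSError as exc:  # socket.herror is a subclass of OSError
            problems.append(f"{fqdn}: no PTR record for {ip} ({exc})")
            continue
        # Compare case-insensitively and ignore a trailing dot.
        if name.rstrip(".").lower() != fqdn.rstrip(".").lower():
            problems.append(f"{fqdn}: PTR for {ip} returns {name}")
    return problems
```

Call it with the FQDN of every SDDC Manager, vCenter, NSX, and Aria appliance in your inventory; it flags a failed forward lookup, a missing PTR record, or a PTR record that points at a different name.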

Backup Requirements

Take backups, and snapshots where the product supports them, of:

- SDDC Manager
- vCenter Server
- NSX Managers
- Aria Components
- External databases
- Automation appliances

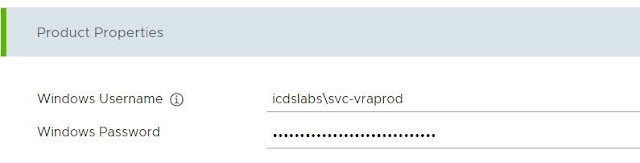
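To confirm that nothing on the list was missed, a simple freshness check can compare the time of the last backup of each component against a maximum age. This is a hypothetical sketch: the 24-hour threshold is an assumption, and the timestamps would come from your backup tooling rather than from any VCF API.

```python
from datetime import datetime, timedelta
from typing import Dict, List

# Components from the backup list above.
REQUIRED_BACKUPS = [
    "SDDC Manager",
    "vCenter Server",
    "NSX Managers",
    "Aria Components",
    "External databases",
    "Automation appliances",
]


def backup_gaps(
    last_backup: Dict[str, datetime],
    now: datetime,
    max_age: timedelta = timedelta(hours=24),  # assumed freshness window
) -> List[str]:
    """List components with no recorded backup or a backup older than max_age."""
    gaps = []
    for component in REQUIRED_BACKUPS:
        taken = last_backup.get(component)
        if taken is None:
            gaps.append(f"{component}: no backup recorded")
        elif now - taken > max_age:
            gaps.append(f"{component}: last backup at {taken:%Y-%m-%d %H:%M} is too old")
    return gaps
```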

Password and Certificate Validation

Validate:

- Certificate validity
- Expired passwords
- Locked service accounts
- SSH accessibility
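Expired or soon-to-expire passwords can be flagged ahead of time with a small date calculation. In this sketch the account names are hypothetical, and the 90-day maximum age and 14-day warning window are assumptions; substitute the password policies actually configured on each component.

```python
from datetime import date, timedelta
from typing import Dict


def accounts_needing_rotation(
    last_changed: Dict[str, date],
    today: date,
    max_age_days: int = 90,  # assumed password policy
    warn_days: int = 14,  # assumed warning window
) -> Dict[str, str]:
    """Map each account to 'expired' or 'expires soon'; healthy accounts are omitted."""
    status = {}
    for account, changed in last_changed.items():
        expires = changed + timedelta(days=max_age_days)
        if expires <= today:
            status[account] = "expired"
        elif expires - today <= timedelta(days=warn_days):
            status[account] = "expires soon"
    return status
```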

Review Interoperability Matrix

Confirm that all component versions are compatible with VCF 9.1.

Upgrade Sequence Overview

The VCF 5.2.x to 9.1 upgrade process follows a strict sequence.

The high-level workflow is:

- Upgrade Aria Operations
- Upgrade Protection Components
- Upgrade Avi Load Balancer
- Upgrade SDDC Manager
- Deploy VCF Management Services
- Upgrade Automation Components
- Upgrade Network and Security Components
- Upgrade vCenter
- Upgrade ESXi Hosts
- Upgrade Edge Clusters
- Upgrade Kubernetes Supervisors
- Upgrade VMware Tools and VM Compatibility

This sequence is critical and should not be modified.
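One way to keep the sequence intact when building a runbook is to encode it once and check every proposed plan against it. The Python sketch below assumes the stage names from the workflow list above; `order_violations` is a hypothetical helper, and stages that are not deployed (for example Avi or the protection components) are simply left out of the plan.

```python
from typing import List

# Stage names taken from the high-level workflow above, in order.
UPGRADE_ORDER = [
    "Aria Operations",
    "Protection Components",
    "Avi Load Balancer",
    "SDDC Manager",
    "VCF Management Services",
    "Automation Components",
    "Network and Security Components",
    "vCenter",
    "ESXi Hosts",
    "Edge Clusters",
    "Kubernetes Supervisors",
    "VMware Tools and VM Compatibility",
]


def order_violations(plan: List[str]) -> List[str]:
    """Return ordering problems; an empty list means the plan follows UPGRADE_ORDER.

    Checking adjacent pairs is enough: any out-of-order plan contains at
    least one adjacent pair that is out of order.
    """
    rank = {stage: index for index, stage in enumerate(UPGRADE_ORDER)}
    unknown = [stage for stage in plan if stage not in rank]
    if unknown:
        raise ValueError(f"unknown stages: {unknown}")
    violations = []
    for earlier, later in zip(plan, plan[1:]):
        if rank[earlier] > rank[later]:
            violations.append(f"{later} must run before {earlier}")
    return violations
```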

Step 1 – Upgrade Aria Operations to VCF Operations 9.1

VCF Operations becomes a mandatory component in the VCF 9.x architecture.

If Aria Operations already exists:

- Upgrade it to version 9.1
- Validate cluster health
- Verify collectors and cloud proxies

If Aria Suite Lifecycle is managing the environment:

- Upgrade through Aria Suite Lifecycle
- Validate product binaries
- Ensure repository synchronization

During this phase:

- Lifecycle services begin transitioning toward the new VCF management architecture
- Legacy lifecycle appliances start being phased out

VMware notes that VCF Management Services will replace much of the previous Aria Suite Lifecycle functionality.

Step 2 – Upgrade Protection and Recovery Components

If deployed, upgrade the following:

- vSphere Replication
- VMware Live Site Recovery
- vSAN Data Protection

These components are optional, depending on your deployment architecture.

Ensure replication health is clean before proceeding.
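"Clean" replication health can be summarized as every replication being in an OK state and within its RPO. The sketch below assumes a hypothetical `Replication` record populated from vSphere Replication or Live Site Recovery reports; it does not call any product API.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta
from typing import List


@dataclass
class Replication:
    """Hypothetical record built from your replication reports."""
    vm: str
    status: str  # e.g. "OK" or "ERROR" as reported by the tooling
    rpo: timedelta
    last_sync: datetime


def replication_issues(replications: List[Replication], now: datetime) -> List[str]:
    """Return issues; an empty list means replication health is clean."""
    issues = []
    for rep in replications:
        if rep.status != "OK":
            issues.append(f"{rep.vm}: status {rep.status}")
        elif now - rep.last_sync > rep.rpo:
            issues.append(f"{rep.vm}: last sync exceeds RPO of {rep.rpo}")
    return issues
```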

Step 3 – Upgrade VMware Avi Load Balancer

If NSX Advanced Load Balancer (Avi) is integrated:

- Upgrade controllers
- Upgrade SE groups
- Validate cloud connectors
- Validate certificates

Check:

- NSX integration
- VIP reachability
- DNS integration
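VIP reachability can be spot-checked with a plain TCP connection to each virtual service. This Python sketch uses `socket.create_connection` from the standard library; `unreachable_vips` is a hypothetical helper, the addresses and ports you pass are your own, and a successful connection only proves the port answers, not that the application behind it is healthy.

```python
import socket
from typing import Callable, List, Tuple


def unreachable_vips(
    vips: List[Tuple[str, int]],
    timeout: float = 3.0,
    connect: Callable = socket.create_connection,
) -> List[str]:
    """Return 'host:port' for every VIP that refuses or times out a TCP connection."""
    failed = []
    for host, port in vips:
        try:
            conn = connect((host, port), timeout)
        except OSError:  # covers refused connections and timeouts
            failed.append(f"{host}:{port}")
        else:
            conn.close()
    return failed
```

Running it before and after the controller and SE group upgrades gives a quick comparison of which virtual services stopped answering.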

Step 4 – Upgrade SDDC Manager to 9.1

This is one of the most important upgrade stages.

The process includes:

- Download upgrade bundles
- Run prechecks
- Resolve all blocking issues
- Execute the SDDC Manager upgrade

The workflow remains similar to previous VCF releases, but VCF 9.1 introduces deeper integration with Fleet Lifecycle Management.

Important Validation Areas

Check Bundle Repository

Verify:

- Bundle availability
- Download integrity
- Repository synchronization
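Where bundles are downloaded or copied manually (for example into an offline depot), download integrity can be confirmed by comparing a SHA-256 digest. The sketch assumes a published checksum is available for the file; `sha256_of` and `bundle_intact` are hypothetical helpers, not part of SDDC Manager.

```python
import hashlib
from pathlib import Path


def sha256_of(path: Path, chunk_size: int = 1024 * 1024) -> str:
    """Hash a file in chunks so multi-GB bundles do not need to fit in memory."""
    digest = hashlib.sha256()
    with path.open("rb") as handle:
        for chunk in iter(lambda: handle.read(chunk_size), b""):
            digest.update(chunk)
    return digest.hexdigest()


def bundle_intact(path: Path, expected_sha256: str) -> bool:
    """Compare the file digest with the published checksum (case-insensitive)."""
    return sha256_of(path) == expected_sha256.strip().lower()
```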

Run Upgrade Prechecks

Common failures include:

- DNS mismatch
- Password expiration
- Certificate issues
- NTP drift
- Resource shortages

Resolve every error before proceeding.
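The "resolve every error" rule can be expressed as a gate over precheck results. In the sketch below, `CheckResult` is a hypothetical record type, the NTP offsets are assumed to be gathered per appliance by your own tooling, and the 1000 ms limit is an assumption rather than a documented VCF threshold. Warnings are treated as non-blocking here, a design choice you may want to tighten.

```python
from dataclasses import dataclass
from typing import Dict, List


@dataclass
class CheckResult:
    """Hypothetical precheck result record."""
    name: str
    severity: str  # "ERROR", "WARNING" or "INFO"
    detail: str = ""


def ntp_drift_results(offsets_ms: Dict[str, float], limit_ms: float = 1000.0) -> List[CheckResult]:
    """Turn per-appliance NTP offsets into ERROR results when they exceed limit_ms."""
    return [
        CheckResult(f"NTP drift on {node}", "ERROR", f"{offset:.0f} ms")
        for node, offset in sorted(offsets_ms.items())
        if abs(offset) > limit_ms
    ]


def blocking_issues(results: List[CheckResult]) -> List[CheckResult]:
    """Errors block the upgrade; warnings are reported but do not block here."""
    return [result for result in results if result.severity == "ERROR"]


def ready_to_upgrade(results: List[CheckResult]) -> bool:
    return not blocking_issues(results)
```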

Step 5 – Deploy VCF Management Services

This is the major architectural transition point.

VCF 9.1 introduces VCF Management Services hosted on a shared services runtime.

The deployment includes:

- Lifecycle services
- Software depot
- Identity services
- Fleet management
- Runtime services
- Log management

During deployment:

- New runtime clusters are deployed
- Service VMs are created
- Licensing services are configured

According to VMware documentation, the services runtime acts as the common foundation for lifecycle management and automation services.

Step 6 – Identity Services Migration

Older VMware Identity Manager components are not directly upgraded.

Instead:

- Identity Broker 9.1 is deployed
- Authentication services migrate into the new architecture
- The existing Identity Manager may remain in place temporarily to satisfy authentication dependencies

This is an important operational consideration during migration.

Step 7 – Upgrade Automation Components

Next, upgrade:

- Aria Automation → VCF Automation 9.1
- Aria Orchestrator → VCF Operations Orchestrator
- Aria Operations for Networks
- HCX
- Log Management services

VCF 9.1 introduces a more service-oriented automation architecture.

Key improvements include:

- Shared runtime integration
- Better lifecycle control
- Unified automation framework
- Improved scalability

Step 8 – Upgrade NSX Components

Once management services are stable:

- Upgrade the NSX Manager cluster
- Validate cluster synchronization
- Check transport nodes
- Validate overlay connectivity

VCF 9.1 changes the upgrade sequencing slightly: the NSX Manager upgrade occurs earlier in the workflow than in older releases.

Validation Checklist

- TEP connectivity
- BGP status
- Edge health
- Tunnel status
- Segment reachability
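A practical way to run this checklist is to capture the state of each item before the upgrade and compare it afterwards. The sketch below assumes the states (BGP neighbor state, tunnel status, and so on) have already been exported into dictionaries by your own tooling; the item keys are hypothetical.

```python
from typing import Dict, List


def regressions(before: Dict[str, str], after: Dict[str, str]) -> List[str]:
    """List items whose state changed, or that disappeared, between two snapshots.

    Items that appear only in the 'after' snapshot are not reported.
    """
    changes = []
    for item, old_state in sorted(before.items()):
        new_state = after.get(item, "MISSING")
        if new_state != old_state:
            changes.append(f"{item}: {old_state} -> {new_state}")
    return changes
```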

Step 9 – Upgrade vCenter Server

Next, upgrade vCenter Server.

The process includes:

- Snapshot creation
- Compatibility validation
- Lifecycle execution
- Service validation

Post-upgrade validation:

- Cluster health
- DRS status
- HA status
- vSAN health
- Storage policy validation

Step 10 – Upgrade ESXi Hosts

ESXi upgrades can now be performed with significantly higher parallelism in VCF 9.1.

VMware states that VCF 9.1 supports upgrades across up to 256 clusters simultaneously.

ESXi Upgrade Workflow

- Enter maintenance mode
- Validate vSAN evacuation
- Upgrade host image
- Reboot host
- Exit maintenance mode
- Validate cluster health
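The parallelism described above can be modeled as waves of host upgrades. This sketch assumes one host per cluster is in maintenance mode at a time (a common choice for vSAN clusters) and caps each wave at 256 clusters, the figure VMware quotes. `upgrade_waves` is a hypothetical planning helper; it only orders the work, and each host still goes through the workflow listed above under SDDC Manager's control.

```python
from typing import Dict, List, Tuple


def upgrade_waves(
    clusters: Dict[str, List[str]],
    max_parallel_clusters: int = 256,
) -> List[List[Tuple[str, str]]]:
    """Plan waves of (cluster, host) pairs.

    Each wave takes at most one host from each cluster, so only one host per
    cluster is in maintenance mode at a time, and touches at most
    max_parallel_clusters clusters.
    """
    if max_parallel_clusters < 1:
        raise ValueError("max_parallel_clusters must be at least 1")
    queues = {name: list(hosts) for name, hosts in clusters.items()}
    waves = []
    while any(queues.values()):
        active = [name for name in sorted(queues) if queues[name]][:max_parallel_clusters]
        waves.append([(name, queues[name].pop(0)) for name in active])
    return waves
```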

Step 11 – Upgrade NSX Edge Clusters

Once hosts are upgraded:

- Upgrade Edge appliances
- Validate Tier-0/Tier-1 routing
- Check BGP sessions
- Validate load balancer functionality

Finally:

- Complete the NSX finalize upgrade task

Step 12 – Upgrade Kubernetes and Supervisor Services

If using Supervisor Clusters or VKS:

- Upgrade Supervisor clusters
- Validate namespaces
- Upgrade Tanzu Kubernetes clusters
- Validate CSI and CNI operations

VCF 9.1 includes enhanced VKS operational capabilities and improved cost visibility for Kubernetes workloads.

Step 13 – Upgrade VMware Tools and VM Compatibility

Final cleanup activities include:

- VMware Tools upgrade
- VM hardware compatibility upgrade
- vSAN on-disk format upgrade
- vSAN File Services upgrade

These should be planned carefully to avoid unnecessary guest downtime.

Common Upgrade Challenges

DNS Problems

DNS is the most common source of problems in VCF upgrades.

Ensure:

- Forward lookup works
- Reverse PTR records exist
- All FQDNs resolve correctly

Community discussions frequently highlight DNS issues as major blockers during upgrades.

Certificate Issues

Check for:

- Expired certificates
- Incorrect SAN entries
- Trust chain issues
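SAN entries and expiry dates can be checked from the dictionary that Python's `ssl.SSLSocket.getpeercert()` returns for a verified connection. The sketch below works on that dictionary shape (`subjectAltName` and `notAfter` keys) and uses `ssl.cert_time_to_seconds` to parse the expiry date; `certificate_problems` is a hypothetical helper, the 30-day warning window is an assumption, and wildcard SANs are not handled.

```python
import ssl
import time
from typing import List, Optional


def certificate_problems(
    cert: dict,
    fqdn: str,
    now: Optional[float] = None,
    warn_days: int = 30,  # assumed warning window
) -> List[str]:
    """Check a getpeercert()-style dict for a matching DNS SAN and a safe expiry date."""
    problems = []
    sans = [value.lower() for kind, value in cert.get("subjectAltName", ()) if kind == "DNS"]
    if fqdn.lower() not in sans:
        problems.append(f"{fqdn} missing from SAN entries {sans}")
    expires = ssl.cert_time_to_seconds(cert["notAfter"])
    now = time.time() if now is None else now
    days_left = (expires - now) / 86400
    if days_left < 0:
        problems.append("certificate has expired")
    elif days_left < warn_days:
        problems.append(f"certificate expires in {days_left:.0f} days")
    return problems
```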

Resource Constraints

VCF 9.1 introduces additional management services.

Ensure adequate:

- CPU

- Memory

- Storage

- IP pools
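A rough headroom calculation helps before deploying the additional services. In this sketch the pool names, current usage, and required amounts are placeholders; take the real requirements from the VCF 9.1 sizing guidance, and treat the 20% reserve as an assumption.

```python
from dataclasses import dataclass
from typing import Dict


@dataclass
class Pool:
    """Hypothetical capacity record for one resource (CPU, memory, storage, IPs)."""
    total: float
    used: float


def headroom(pools: Dict[str, Pool], required: Dict[str, float], reserve: float = 0.2) -> Dict[str, bool]:
    """For each resource, report whether the new services fit while keeping `reserve` of the total free."""
    fits = {}
    for resource, need in required.items():
        pool = pools[resource]
        usable = pool.total * (1 - reserve) - pool.used
        fits[resource] = usable >= need
    return fits
```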

NSX Integration Problems

Validate:

- Manager connectivity
- Edge synchronization
- Host transport nodes
- Segment health

Best Practices for Production Upgrades

Build a Detailed Upgrade Plan

Document:

- Upgrade order
- Downtime windows
- Rollback procedures
- Validation checkpoints

Use a Lab Environment First

Always test:

- Upgrade workflow
- Prechecks
- DNS configuration
- Certificate validation
- Service migration

Validate After Every Stage

Do not rush through sequential upgrades.

Before moving to the next phase, validate:

- Service health
- Cluster health
- Workload functionality
- NSX status
- vSAN health
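The validate-after-every-stage rule can be enforced by a small runbook harness that stops as soon as any check fails. In this hypothetical sketch each stage is a name, a callable that performs (or prompts the operator for) the upgrade, and a list of check callables, such as a vSAN or NSX health probe, that you would implement yourself.

```python
from typing import Callable, List, Tuple

# A stage is (name, action that performs or prompts for the upgrade, health checks).
Stage = Tuple[str, Callable[[], None], List[Callable[[], bool]]]


def run_with_gates(stages: List[Stage]) -> List[str]:
    """Run stages in order and stop at the first stage whose checks do not all pass."""
    completed = []
    for name, upgrade, checks in stages:
        upgrade()
        failed = [check.__name__ for check in checks if not check()]
        if failed:
            raise RuntimeError(f"stopping after {name}: failed checks {failed}")
        completed.append(name)
    return completed
```

Raising instead of returning a partial result makes it impossible for a script to continue past a failed gate by accident.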

The upgrade from VCF 5.2.x to VCF 9.1 is one of the most significant platform transformations in VMware Cloud Foundation history.

VCF 9.1 introduces:

- Modern lifecycle architecture
- Unified management services
- Improved scalability
- Enhanced automation
- Centralized operational visibility
- Better lifecycle orchestration
- Simplified private cloud operations

While the upgrade process is extensive, proper planning, validation, and sequencing make the transition manageable and predictable.

Organizations adopting VCF 9.1 gain access to a significantly modernized private cloud platform designed for both traditional VM workloads and modern Kubernetes-based applications.

For environments planning long-term private cloud modernization, VCF 9.1 becomes a foundational operational platform rather than simply another infrastructure upgrade.

Useful References